My research sits at the intersection of AI4Math, AI4Science, and open-source scientific infrastructure. On the formal reasoning side, I lead efforts in our lab on AI for mathematics and theorem proving in Lean, and I maintain the LeanDojo website for our broader theorem-proving ecosystem. On the scientific machine learning side, I work on neural operators, PDE learning, and reliable ML systems for scientific discovery.

These directions are closely connected: the long-term goal is to build machine learning systems that are not only powerful, but also interpretable, verifiable, and usable in real scientific workflows. In practice, that means combining formal reasoning tools, theorem proving, program verification, and neural operator methods into a shared research agenda for trustworthy AI in mathematics and science.

AI4Math

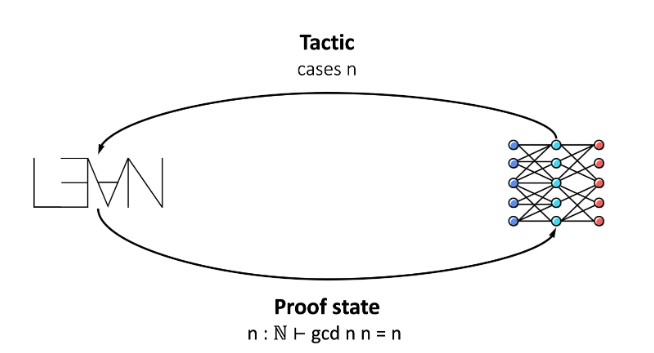

My AI4Math work focuses on combining large language models with formal proof assistants, especially Lean, to make theorem proving and formalization more scalable and more useful for mathematicians. I lead and contribute to projects that span proof search, interactive theorem proving, verification, and mathematical discovery.

- LeanCopilot: using language models to autocomplete tactics and suggest proof steps inside Lean.

- LeanAgent: lifelong learning for theorem proving across repositories and mathematical domains.

- LeanDojo: infrastructure for interacting with Lean programmatically, extracting proof data, and building AI-assisted theorem proving systems.

- LeanProgress: a proof progress model for guiding search in neural theorem proving.

- TorchLean and related verification projects: bringing neural networks, program verification, and formal reasoning into the Lean ecosystem.

AI4Science

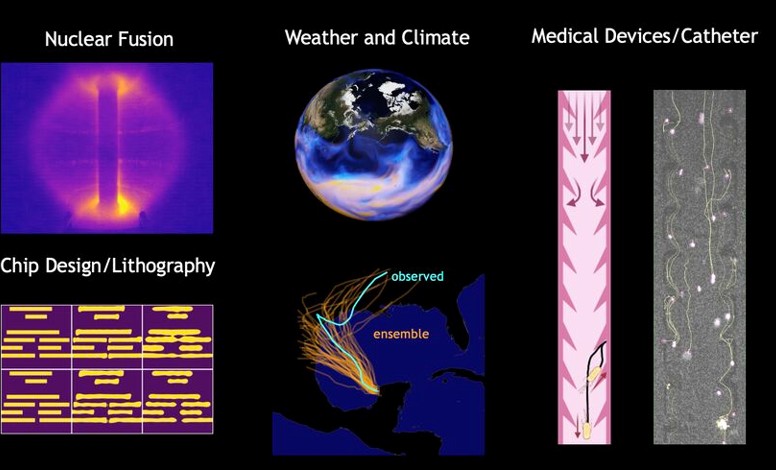

My AI4Science work centers on neural operators and operator learning for large-scale scientific machine learning. This includes building models for PDEs, designing architectures that generalize across discretizations, and developing scalable training methods for real scientific problems.

- NeuralOperator: open-source infrastructure for learning mappings between function spaces.

- Fourier Neural Operator: spectral operator-learning methods for parametric PDEs.

- Physics-informed neural operators: incorporating physical structure and constraints into operator learning.

- CoDA-NO: pretraining codomain-attention neural operators for multiphysics PDEs.

Open-Source and Research Infrastructure

An important part of my work is building and maintaining research infrastructure that other people can use. I regularly contribute to open-source repositories across theorem proving, formalization, and scientific machine learning, including:

- LeanDojo: infrastructure for AI-assisted theorem proving in Lean.

- DeepMind Formal Conjectures: formalized mathematical conjectures in Lean.

- CSLib: the Lean computer science library.

- NeuralOperator: the main open-source library for neural operator research.

Together, these efforts reflect a single vision: connect formal reasoning, verification, and scientific machine learning so that future AI systems can help discover mathematics, reason about programs, and solve scientific problems with stronger guarantees and better tooling.